|

Educator's Corner: Richard Wiener, University of Colorado, Colorado Springs, U.S.A. |

COLUMN PDF Version |

I shall wear the hat of columnist from time-to-time. This series of columns is aimed at fellow educators both within and outside the University. Although the subject area addressed by the column may not be directly involved with object technology, an object-oriented approach to problem solving will be featured.

This first column demonstrates how one might wish to introduce the subject of heuristics in teaching algorithm design. To stimulate student interest in this subject, I have chosen a problem familiar to most students – the game of MasterMind™ (registered trademark of Pressman Toy Company). Master Mind was invented in 1970-71 by Mordecai Meirowitz, an Israeli Postmaster. Over 55 million games have been sold worldwide since its release in 1972.

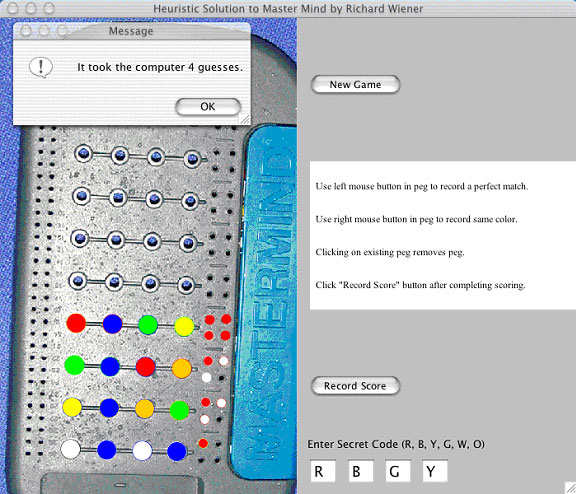

The challenge is to design an algorithm that forms the basis of an application program that allows the computer to guess the human user’s secret code with the fewest number of guesses. In this case the application program shall be constructed using Java 1.3.1 running on an Apple Macintosh under OS X. Since this is a new platform for me (most of my work has been done on a Windows 2000 platform) I shall comment, whenever appropriate, on the tools available under this platform.

1 A REVIEW OF MASTER-MIND

The user must first construct a secret code consisting of a sequence of four colors chosen from red, blue, yellow, green, white and orange. In our context, the application program (using the heuristic algorithm) must “guess” the user’s code through a series of steps. At each step, the program produces a 4-tuple of colors. The user must then input a score associated with the program’s 4-tuple. The scoring is performed as follows: for each color in the 4-tuple the program produces that is identical in color and position to the user’s secret code, the user uses a red peg to score that hit. For each color the program produces that is the same as one of the user’s colors but is not in the correct position, the user uses a white peg to score that hit. Once the user completes the scoring of the program’s 4-tuple, the program produces a new 4-tuple which the user then scores. This process continues until the program produces a 4-tuple that exactly matches the user’s secret code in both colors and positions. In this final case, the user uses 4 red pegs to indicate that the application program has succeeded in “guessing” the secret code.

“Guessing” the user’s secret code when playing the actual game by hand is non-trivial, since there are 1296 possible 4-tuples that can form the basis for the secret code. In 1993, Kenji Koyama and Tony W. Lai calculated that the best strategy uses an average of 4.340 moves.

2 THE HEURISTIC ALGORITHM

The heuristic algorithm that shall be used in the application program appears on the website of Radu Rosu (http://www.unc.edu/~radu/mm/MMS.html).

After an arbitrary initial guess, the algorithm produces a random series of 4-tuples until the first is found that is consistent with all the user’s previous scores. So instead of utilizing deep analysis and mathematics, the algorithm uses total randomness. The simplicity of the algorithm makes it appealing. In addition, we shall see that this simple algorithm requires an average number of guesses close to 4.6, not too far away from the optimum strategy that requires an average of 4.340 guesses.

The Development Platform, relevant tools and porting Java software

As indicated earlier, the Java application that implements and demonstrates this heuristic approach to playing MasterMind™ was developed on an Apple Macintosh under OS X. JBuilder 6 Enterprise Version was used as the main development tool both for project management and to assist in the production of the GUI. As is known from my review of JBuilder 6 that appeared in the previous issue of JOT (co-authored with Dave Neuendorf), I believe that this Java development tool is of superb quality. It works exactly the same under OS X as under Windows.

To port an earlier version of this application from my PC to the Macintosh, I utilized an important tool, Dave 3.1.1 manufactured by Thursby Software Systems – www.thursby.com. This tool provides complete connectivity between the Apple Macintosh (the new kid on my block) and my existing PC local area network. Without this tool the value of the Macintosh would be significantly reduced.

Any claim that Java software runs “as-is” on all platforms is not true. After porting the Window’s version to the Macintosh, it was quickly evident that many of the labels above and on buttons did not fit properly. The default fonts used on each platform are different. Fortunately, this is the only area that required fine tuning.

3 THE APPLICATION

The application is modeled using 5 classes:

- MasterMindApplication: The usual “main driver” found in many GUI applications.

- MasterMindPanel: An extension of the standard JPanel component. This class is used to hold the game image and supports the graphical images of pegs for scoring and playing.

- Row: This class forms the model of a 4-tuple of color objects. Random row objects can be created and their scores computed.

- MasterMindUI The usual user interface class that contains a MasterMindPanel object as well as the game control buttons and output messages.

- Global: Contains and supports a globally accessible random number object.

Listing 1 contains the details of class Row.

Listing 1 – Class Row

Listing 2 presents some of the details of class MasterMindPanel.

Listing 2 – Class MasterMindPanel

The constructor handles the task of downloading the image from a .gif file. This .gif file was produced by taking a digital photo of the real game. The points defined in the peg and pin arrays were obtained tediously by hand since the digital image was off-center. The paintComponent method is automatically activated whenever the GUI requires refreshing such as in response to a resize event or re-validate event. As is typical in Java, graphics-based messages are transmitted through a Graphics object .

Listing 3 presents some of the details of class MasterMindUI. All the event handlers are present in this class including the logic for the decision about which pin hole the user has selected using either a left or right mouse click.

Listing 3 – Class MasterMindUI

The this_mouseClicked event handler method considers the user to have hit a scoring hole if the mouse click is within plus or minus 3 pixels of the center.

The heuristic algorithm that is at the heart of the application is shown in the private enterScore method. The pertinent code is shown in boldface.

Listing 4 shows the details of class Global that makes a random number object globally accessible.

Listing 4 – Class Global

4 RUNNING THE APPLICATION ON A MACINTOSH

The OS X environment on the Macintosh makes it easy to create “clickable” applications. A tool called MRJAppBuilder may be used to create a native Macintosh application. Its wizard walks the user through the appropriate steps. Another approach, and one that I prefer, is to create an application .jar file that is clickable. To make the .jar file clickable, one needs to create a manifest.txt file and use it while constructing the .jar file for the application.

The manifest.txt file needed to make the application clickable contains the one line,

Main-Class: MasterMindApplication

From an OS X shell opened to the sub-directory containing the application files (the ability for a Macintosh programmer to have access to a standard command shell is a relatively new event in the history of Apple Computers – one that is long overdue and greatly appreciated), the application is compiled using the usual

javac *.java command

Next the clickable .jar file is produced from the command:

jar –cvfm MasterMind.jar manifest.txt *.class

The MasterMind.jar file can then be renamed simply MasterMind and double clicked to launch it.

A screen shot of the application while running is:

5 SOME STATISTICS ON THE HEURISTIC ALGORITHM

It is interesting to examine the efficiency and relative performance of this heuristic algorithm by producing statistics that output:

- The average number of random 4-tuples required as a function of board position before one is accepted.

- The average number of “guesses” that the algorithm requires.

To accomplish this we generate all possible 64 = 1296 4-tuples as secret codes and for each determine the number of guesses required for each board position and the total number of rows required (guesses required) before a solution is reached. We output the average results over the 1296 games that are simulated.

Listing 5 presents the details of class MasterMindStats.

Listing 5 – Class MasterMindStats

Listing 6 shows the support class TimeInterval.

Listing 6 – Class TimeInterval

The output for a typical run is:

Elapsed time: 12.858 seconds.

Average number of guesses: 4.639

Average Number 4-tuples Generated[1] = 11.434

Average Number 4-tuples Generated[2] = 87.522

Average Number 4-tuples Generated[3] = 430.57

Average Number 4-tuples Generated[4] = 530.5

Average Number 4-tuples Generated[5] = 158.288

Average Number 4-tuples Generated[6] = 12.807

Average Number 4-tuples Generated[7] = 0

Average Number 4-tuples Generated[8] = 0

Complete Java sources are available for download at http://www.jot.fm/issues/issue_2002_07/column6/Mastermind.zip

About the author

|

Richard Wiener is Associate Professor of Computer Science at the University of Colorado at Colorado Springs. He is also the Editor-in-Chief of JOT and former Editor-in-Chief of the Journal of Object Oriented Programming. In addition to University work, Dr. Wiener has authored or co-authored 21 books and works actively as a consultant and software contractor whenever the possibility arises. |

Cite this column as follows: Richard Wiener: "Educator’s Corner: An OO Application that introduces Heuristic Algorithm Design", in Journal of Object Technology, vol. 1, no. 2, July-August 02, pp. 63-78. http://www.jot.fm/issues/issue_2002_07/column6